Your AI is a Generic Intern. Here's How LoRA Fine-Tuning Turns It into a Pro.

Quick Summary (TL;DR)

• Custom AI Without the Custom Price Tag: LoRA fine-tuning is a hyper-efficient method to teach a general AI about your specific brand, products, and customers, without the eye-watering cost of retraining a massive model from scratch.

• Massive Cost Savings: Instead of altering billions of parameters, LoRA adds tiny, trainable “adapters.” This drastically cuts down on GPU time and costs, making bespoke AI accessible to more than just tech giants.

• The Efficiency Sweet Spot: An Amazon Science ablation study (covered below) found that targeting a single, specific part of the AI model (a module called o_proj) delivers a reliable balance of performance and speed across tasks. It's the ultimate 80/20 rule for AI customization.

—

You did it. You finally integrated a fancy AI into your eCommerce workflow. It was supposed to be your new superpowered intern, churning out brilliant product descriptions and charming customer service replies 24/7. Instead, it sounds like a corporate robot that mainlines thesauruses and has the personality of a dial tone. It doesn't get your brand's edgy humor or your customers' specific pain points.

This is the curse of generic, off-the-shelf AI. It’s trained on the entire internet, so it knows a little about everything but is an expert in nothing—especially not your unique business. You could spend a fortune on a full fine-tuning, which is like sending your intern for a four-year PhD. Or, you could use a smarter, faster, and cheaper method that’s taking the AI world by storm: LoRA fine-tuning.

This guide will break down what LoRA is, why it’s a game-changer for eCommerce, and how you can use its principles to build an AI that feels less like a generic tool and more like your most valuable team member.

What the Heck is LoRA Fine-Tuning, Anyway?

LoRA, which stands for Low-Rank Adaptation, is a technique for efficiently customizing large language models (LLMs). Let's ditch the jargon. Imagine your LLM is a brand-new, high-performance car. Its engine (the base model) is incredibly powerful but generic.

- Full Fine-Tuning is like completely disassembling and rebuilding the entire engine to specialize it for racing. It’s incredibly effective but astronomically expensive and time-consuming.

- LoRA Fine-Tuning is like adding a custom-designed, lightweight turbocharger. You don't touch the core engine. Instead, you bolt on a small, highly specialized part (an “adapter”) that dramatically boosts performance for your specific needs. It’s cheaper, faster, and you can even swap different turbochargers for different race tracks.

In technical terms, LoRA freezes the LLM's original weights and injects pairs of small, trainable low-rank matrices into specific layers of the model. This means you're only training a tiny fraction (typically well under 1%) of the total parameters, which is why it's so efficient.
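To make that concrete, here is a minimal sketch in plain Python, not a production implementation. It assumes the standard LoRA formulation, where a layer's output is the frozen weights' output plus a scaled product of two small matrices. The toy sizes and the names (`lora_forward`, `alpha`, `r`) are illustrative choices, not taken from any particular library.

```python
import random


def matvec(matrix, vector):
    """Multiply a matrix (a list of rows) by a vector."""
    return [sum(w * x for w, x in zip(row, vector)) for row in matrix]


def lora_forward(W, A, B, x, alpha, r):
    """Return W x + (alpha / r) * B (A x). W stays frozen; only A and B train."""
    base = matvec(W, x)
    update = matvec(B, matvec(A, x))
    scale = alpha / r
    return [b + scale * u for b, u in zip(base, update)]


random.seed(0)
d_out, d_in, r = 6, 8, 2  # toy sizes; real layers have thousands of rows and columns

# Frozen base weights (d_out x d_in): never updated during fine-tuning.
W = [[random.gauss(0, 1) for _ in range(d_in)] for _ in range(d_out)]
# Trainable adapter matrices: A is r x d_in, B is d_out x r and starts at zero.
A = [[random.gauss(0, 0.01) for _ in range(d_in)] for _ in range(r)]
B = [[0.0] * r for _ in range(d_out)]

x = [1.0] * d_in
# With B at zero, the adapted layer gives exactly the base model's output.
assert lora_forward(W, A, B, x, alpha=4, r=r) == matvec(W, x)

frozen_params = d_out * d_in
trainable_params = r * (d_in + d_out)
print(frozen_params, trainable_params)
```

Because B starts at zero, the adapted layer behaves exactly like the original until training nudges it. At realistic sizes the savings are dramatic: for a 4096 x 4096 layer with rank 8, the adapter holds 65,536 trainable values against roughly 16.8 million frozen ones.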

Why This Is a Game-Changer for Your eCommerce Brand

Okay, cool tech. But how does this actually help you sell more stuff? It comes down to two things: money and competitive advantage.

Stop Burning Cash on GPU Bills: The Efficiency Benefit

Training large AI models is notoriously expensive, requiring massive amounts of computational power (and the electricity bills to match). A recent ablation study by Amazon Science on their Nova 2.0 Lite model explored just how efficient LoRA can be. By identifying the most impactful parts of the model to adapt, they found you could achieve massive performance gains with minimal overhead.

By targeting a smaller, well-chosen subset of modules, you can preserve most of the performance gains with significantly better efficiency. This makes custom AI a practical reality, not just a theoretical luxury.

Get an AI That Actually Gets You: The Customization Benefit

A generic AI might describe your artisanal, hand-poured soy candle as: “This scented candle is a wax-based product designed to produce light and a pleasant aroma when lit.” Thanks, Captain Obvious.

An AI fine-tuned with LoRA on your past product descriptions, customer reviews, and brand style guide would write: “Unwind after a chaotic day with our ‘Midnight Library’ candle. Notes of aged leather, vanilla, and sandalwood transport you to a cozy reading nook, even if you’re just hiding from your inbox.”

See the difference? One is a dictionary definition. The other is a sales engine.

A Peek Under the Hood: The (Simplified) How-To Guide

You don't need to be a machine learning engineer to grasp the core concepts. The Amazon study did the heavy lifting to find the shortcuts. Here’s what they discovered.

Step 1: Know Your AI's Anatomy (The Modules)

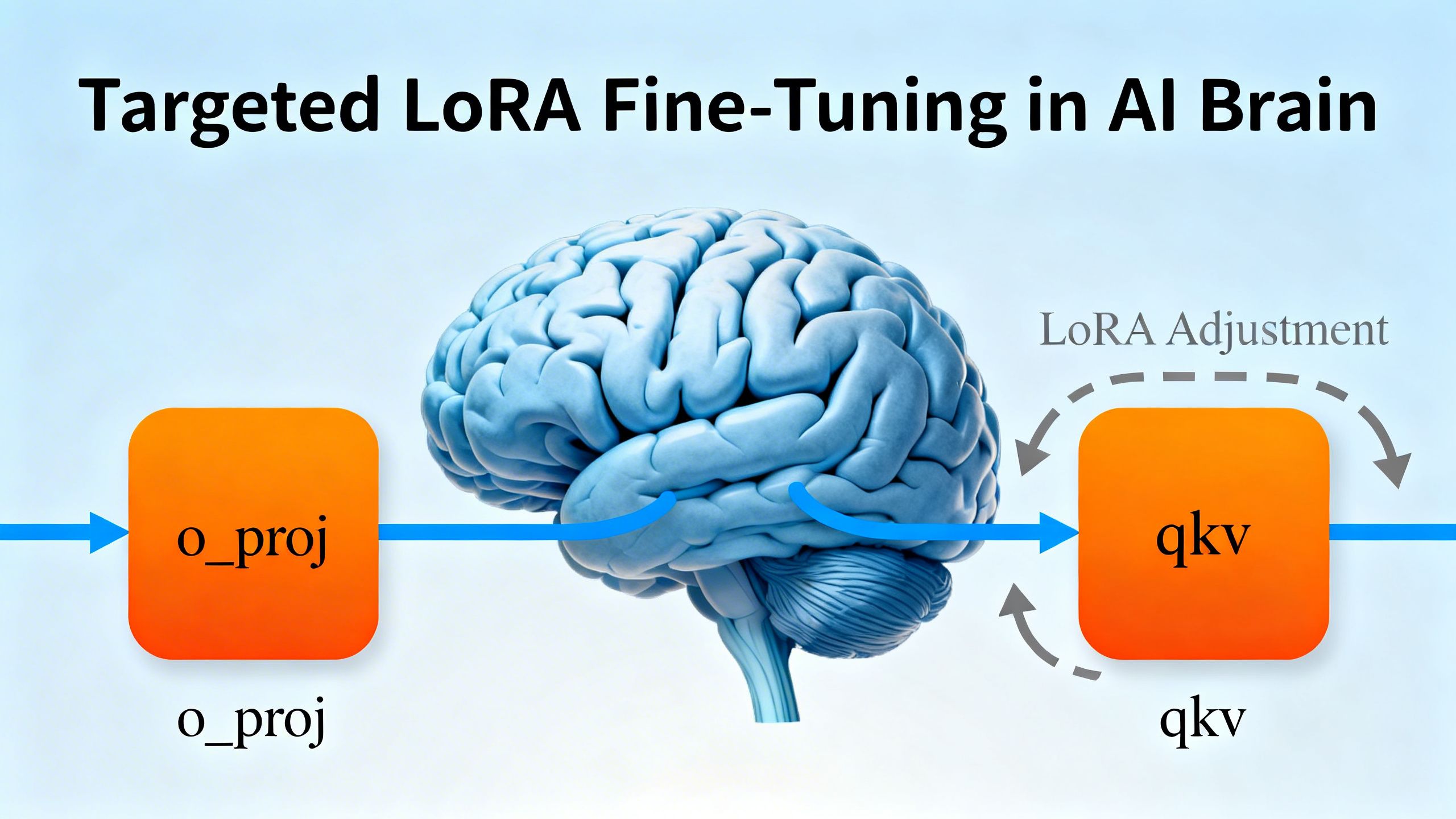

Think of an AI model like a team of specialists. It’s made of repeating blocks, and each block has different components (modules) that do specific jobs. The most important ones are:

- qkv (query, key, value): These are part of the attention mechanism, helping the AI decide which words in a sentence are most important.

- o_proj: This module takes all the insights from the attention mechanism and synthesizes them into a cohesive understanding.

- fc1/fc2: These are feed-forward layers that help with reasoning and knowledge recall.

Step 2: Find the Golden Ticket: The 'o_proj' Sweet Spot

The researchers tested adapting all these modules, both alone and in combination. The breakout star? The o_proj module.

The o_proj-only configuration demonstrated remarkable consistency, never failing outright on any task and typically performing within a few percentage points of the best configuration.

Key Tip: For most eCommerce tasks—like improving marketing copy or summarizing reviews—starting with an o_proj-only LoRA fine-tuning gives you the best bang for your buck. It’s the most reliable and efficient path to a smarter AI.

Step 3: Go for Gold: Combining Modules for Maximum Power

What if you're doing something really complex, like generating a perfectly structured JSON file from a 100-page supplier report? For these heavy-duty tasks, the study found that a combination of o_proj + fc2 delivered the highest accuracy.

Key Tip: For mission-critical or highly complex tasks where accuracy is everything, using a combination like o_proj + fc2 is worth the modest increase in training time. It gives your AI the extra reasoning power it needs to nail the details.
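The two tips above boil down to a simple decision rule. Here is one hedged way to write it down in Python. The `LoraPlan` class, the `choose_plan` helper, and the default rank and alpha values are hypothetical illustrations, not settings reported by the study.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class LoraPlan:
    """Hypothetical container for the adapter settings you would pass to a trainer."""
    target_modules: tuple
    rank: int = 8    # assumed default, not taken from the study
    alpha: int = 16  # assumed default, not taken from the study


def choose_plan(task_is_complex: bool) -> LoraPlan:
    """Pick adapter targets following the article's two tips."""
    if task_is_complex:
        # Heavy-duty structured tasks: adapt attention output plus one feed-forward layer.
        return LoraPlan(target_modules=("o_proj", "fc2"))
    # Everyday copy and summarization tasks: adapt the attention output only.
    return LoraPlan(target_modules=("o_proj",))


copy_plan = choose_plan(task_is_complex=False)
json_plan = choose_plan(task_is_complex=True)
print(copy_plan.target_modules)
print(json_plan.target_modules)
```

In practice you would hand a list like this to your fine-tuning tool. Hugging Face PEFT, for example, accepts it through the `target_modules` argument of `LoraConfig`. Module names differ between model families, so check what your model actually calls these layers before copying the names used here.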

LoRA Fine-Tuning in the Wild: Real eCommerce Scenarios

Let's move from theory to practice. How can LoRA-tuned models transform your daily operations?

Hyper-Personalized Product Descriptions at Scale

Imagine feeding an AI your entire product catalog, top-performing ad copy, and 5-star customer reviews. With LoRA, you can create a model that instantly generates on-brand, SEO-optimized, and emotionally resonant descriptions for every new product you launch. No more writer's block, no more generic copy.

Customer Service That Doesn't Make People Rage-Quit

Train a chatbot on your entire history of support tickets, FAQs, and help-desk articles. A LoRA-tuned bot can provide nuanced, accurate, and empathetic answers that solve real problems, rather than just pointing users to a generic FAQ page. It knows your return policy, your common shipping issues, and how to handle an unhappy customer with your brand's specific tone.
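Before any training happens, those tickets have to be turned into examples the model can learn from. The sketch below shows one possible way to do that, assuming each ticket is a dictionary with `customer_message` and `agent_reply` fields (hypothetical names) and that your training tool accepts a chat-style JSON Lines file. Check your tool's documentation for the exact schema it expects.

```python
import json


def ticket_to_example(ticket, system_prompt):
    """Turn one resolved ticket into a chat-style training example."""
    return {
        "messages": [
            {"role": "system", "content": system_prompt},
            {"role": "user", "content": ticket["customer_message"].strip()},
            {"role": "assistant", "content": ticket["agent_reply"].strip()},
        ]
    }


def to_jsonl(tickets, system_prompt):
    """Serialize tickets as JSON Lines: one training example per line."""
    return "\n".join(
        json.dumps(ticket_to_example(t, system_prompt), ensure_ascii=False)
        for t in tickets
    )


# Made-up sample data for illustration only.
sample_tickets = [
    {
        "customer_message": "  My candle arrived cracked. Can I get a refund? ",
        "agent_reply": "So sorry about that! A replacement is on its way, no return needed.",
    },
]
jsonl_text = to_jsonl(sample_tickets, "You are the friendly support voice of our candle shop.")
print(jsonl_text)
```

Keeping the agent's real replies as the "assistant" turns is what teaches the model your tone, so it pays to include only tickets you would be happy to see answered the same way again.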

From Theory to Checkout: Two Mini Case Studies

The Fashion Brand That Taught its AI to Speak 'Gen Z'

- Challenge: A streetwear brand's ad copy was falling flat on TikTok and Instagram. It was too corporate and didn't connect with their target audience.

- Solution: They used LoRA to fine-tune a language model on a dataset of top-performing influencer posts, viral comments from their community, and their internal brand slang guide.

- Results: The new AI-generated copy was indistinguishable from something written by a top creator. Engagement rates on their ads increased by 40%, and the cost per acquisition dropped because the creative was more effective.

The Subscription Box That Predicted Churn with Surgical Precision

- Challenge: A coffee subscription company was struggling with customer churn. Their generic “10% off” coupon sent to canceling customers was doing nothing.

- Solution: They fine-tuned an AI model on their customer data, including purchase history, support interactions, and survey feedback. The goal was to identify subtle patterns that preceded a cancellation. Acting like an in-house data scientist, the model learned to spot warning behaviors, such as a customer repeatedly skipping a month or viewing the cancellation page, and flag them for proactive outreach.

- Results: Instead of a generic coupon, the support team could now offer personalized solutions, like a different roast or a temporary pause. Churn was reduced by 15% in the first quarter.

Common Screw-Ups: How to Avoid Wasting Your Time

LoRA is powerful, but it's not magic. Here are two common pitfalls to avoid.

The Sledgehammer Approach: Targeting Everything, Achieving Nothing

It’s tempting to think that adapting more modules is always better. But the research shows this isn't true. Targeting all modules can be wasteful, increase latency, and sometimes even lead to worse performance. Stick to the proven configurations: o_proj for general use and o_proj + fc2 for complex tasks.
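A quick back-of-the-envelope calculation shows how fast the adapter grows when you target everything. The layer sizes, layer count, and rank below are hypothetical round numbers chosen for illustration; they are not the dimensions of Nova 2.0 Lite or figures from the study. The only formula used is the standard LoRA parameter count: rank times (input width plus output width) per adapted module.

```python
HIDDEN = 4096   # assumed hidden size
FFN = 16384     # assumed feed-forward width (4x hidden)
LAYERS = 32     # assumed number of repeating blocks
RANK = 8        # assumed LoRA rank

# (input width, output width) of each module within one block.
MODULE_SHAPES = {
    "qkv": (HIDDEN, 3 * HIDDEN),
    "o_proj": (HIDDEN, HIDDEN),
    "fc1": (HIDDEN, FFN),
    "fc2": (FFN, HIDDEN),
}


def lora_params(targets, rank=RANK, layers=LAYERS):
    """Count trainable adapter parameters for the chosen target modules."""
    per_layer = sum(
        rank * (d_in + d_out)
        for d_in, d_out in (MODULE_SHAPES[name] for name in targets)
    )
    return per_layer * layers


for targets in (["o_proj"], ["o_proj", "fc2"], ["qkv", "o_proj", "fc1", "fc2"]):
    print(targets, lora_params(targets))
```

Under these assumptions, adapting every module trains eight times as many parameters as o_proj alone. Parameter count is only a rough proxy for training cost, but it gives a feel for why a narrow, well-chosen target list is the sensible default.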

Garbage In, Garbage Out: The Data Quality Problem

Your fine-tuned model will only ever be as good as the data you train it on. If you feed it messy, inconsistent, or low-quality data, you'll get a messy, inconsistent, and low-quality AI. It might even start confidently making things up, a phenomenon known as AI hallucination.
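Even a very basic cleaning pass catches a surprising amount of junk. The following is a minimal sketch, assuming your training examples are plain text strings. The `clean_examples` helper and its 20-character threshold are illustrative choices, not a recommended standard.

```python
def clean_examples(examples, min_chars=20):
    """Normalize whitespace, then drop too-short entries and case-insensitive duplicates."""
    seen = set()
    cleaned = []
    for text in examples:
        normalized = " ".join(text.split())  # collapse runs of whitespace
        key = normalized.lower()
        if len(normalized) < min_chars or key in seen:
            continue
        seen.add(key)
        cleaned.append(normalized)
    return cleaned


# Made-up sample data for illustration only.
raw_examples = [
    "  Cozy   candle with vanilla notes.  ",
    "cozy candle with vanilla notes.",
    "ok",
    "",
]
cleaned_examples = clean_examples(raw_examples)
print(cleaned_examples)
```

Real pipelines usually go further (fixing encoding problems, removing personal data, checking facts against your catalog), but deduplication and length filtering are a reasonable first step.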

The Missing Piece: Fueling Your Fine-Tuned AI with High-Quality Data

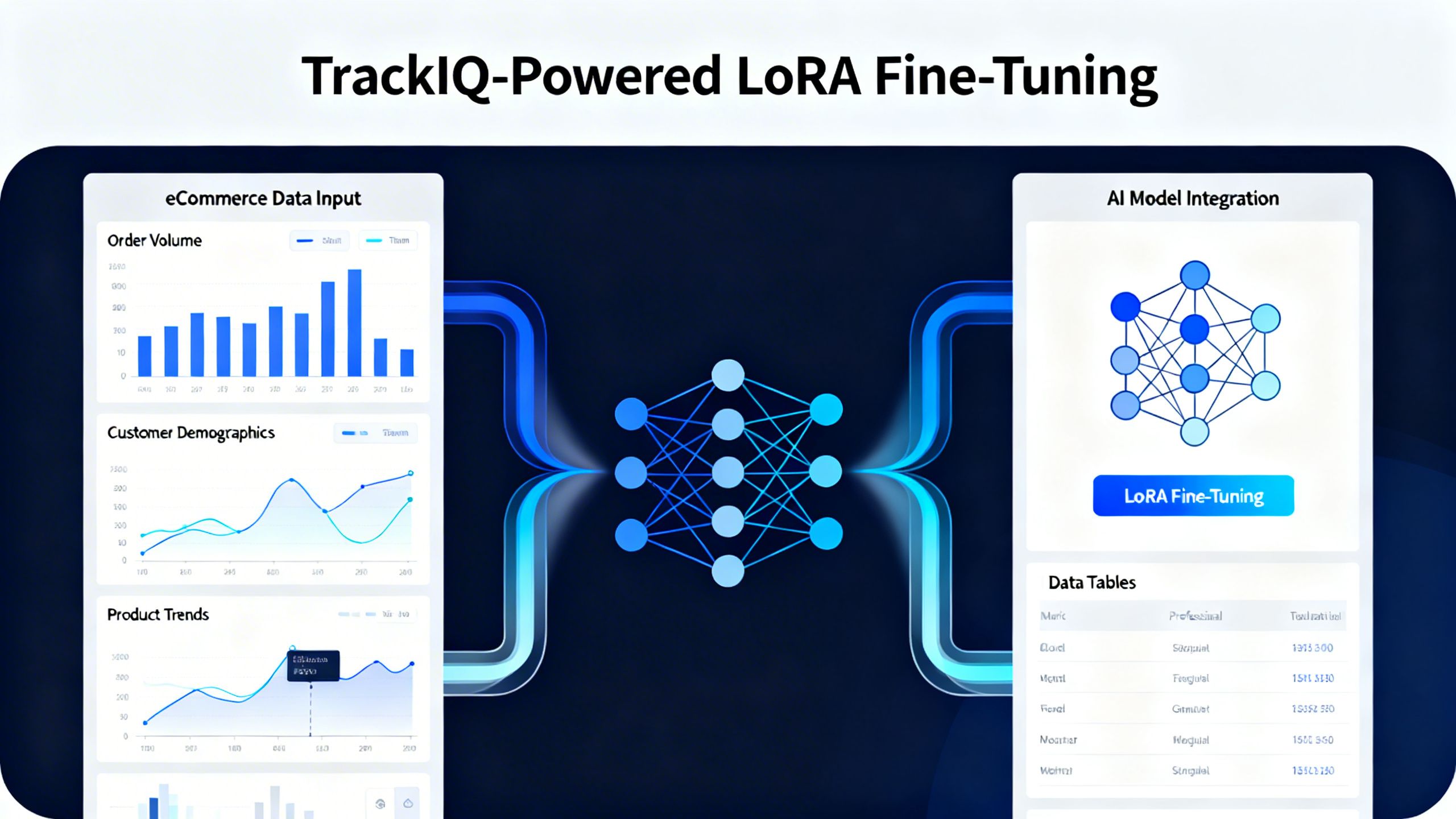

This is where everything comes together. LoRA fine-tuning is the high-performance engine, but clean, structured, and relevant data is the high-octane fuel it needs to run.

This is precisely why a platform like TrackIQ is so critical in the age of AI. You can't just scrape random data and hope for the best. You need a system that can unify, clean, and structure your business data—from sales and inventory to customer reviews and advertising performance.

TrackIQ acts as your data refinery. It takes the raw, chaotic data from your Amazon business and turns it into the pristine fuel required for effective fine-tuning. When you ask your AI a question, you want it to pull from a single source of truth, not a swamp of conflicting spreadsheets. By providing this clean data foundation, TrackIQ ensures that your custom AI learns the right lessons, leading to smarter insights and more profitable actions.

Your Action Plan for Smarter AI

Here are your key takeaways:

- Embrace Customization: Stop settling for generic AI. LoRA fine-tuning is the most accessible and cost-effective way to create an AI that understands your unique business.

- Start with the Sweet Spot: When you begin exploring fine-tuning, remember the o_proj module. It's your most reliable starting point for a massive boost in performance with minimal cost.

- Prioritize Your Data: The success of any AI project hinges on the quality of your data. Before you even think about fine-tuning, focus on getting your data house in order. A clean, unified data source is non-negotiable.

Conclusion

The era of one-size-fits-all AI is coming to an end. The competitive edge in eCommerce will no longer come from simply using AI, but from using a customized AI that is deeply aligned with your brand, your products, and your customers. LoRA fine-tuning is the technology that democratizes this capability.

It transforms AI from a blunt instrument into a surgical tool, allowing you to craft intelligent systems that don't just follow instructions but anticipate needs and create value. By combining the power of LoRA with a rock-solid data foundation from a platform like TrackIQ, you’re not just building a smarter business—you’re building the future of eCommerce.

—