The End of Silos: How AI in Drug Discovery is Ditching Clunky Models for Conversational Chemists

Quick Summary (TL;DR)

• The Old Way is Broken: Traditional AI in drug discovery relies on multiple, siloed Graph Neural Networks (GNNs), creating an expensive and operationally complex mess for chemists.

• A New Sheriff in Town: A single, fine-tuned Large Language Model (LLM) can now outperform a whole suite of GNNs, unifying molecular property prediction into one conversational interface.

• From Data to Dialogue: This shift doesn't just streamline workflows; it allows scientists to ask why a model made a prediction, turning a black box into a collaborative partner and dramatically speeding up research.

—

Ever felt like you're trying to assemble a puzzle with pieces from ten different boxes? That’s been the reality for medicinal chemists for years. The journey to bring a single drug to market is a brutal 10-15 year marathon that costs, on average, over $2 billion. A huge chunk of that time is spent in the early stages, where scientists wrestle with a tangled web of specialized AI models to predict if a molecule is a potential blockbuster or a dud. This is where the promise of AI in drug discovery meets a harsh reality.

Chemists have been forced to navigate multiple interfaces, stitch together disconnected data, and manually interpret a flood of numbers from different systems. It’s slow, inefficient, and feels less like cutting-edge science and more like digital plumbing. But what if you could replace that entire clunky toolkit with a single, brilliant assistant that not only gives you the answers but explains its reasoning? A new approach is doing just that, and it’s poised to change everything.

What is AI in Drug Discovery? (The GNN vs. LLM Showdown)

At its core, AI in drug discovery uses machine learning to predict how a molecule will behave in the human body. For years, the undisputed champions of this field were Graph Neural Networks (GNNs). Think of GNNs as hyper-specialized, workhorse calculators. You feed them a molecule's structure, and they excel at predicting a specific property with high accuracy. The problem? You need a different GNN for almost every property, leading to model sprawl.

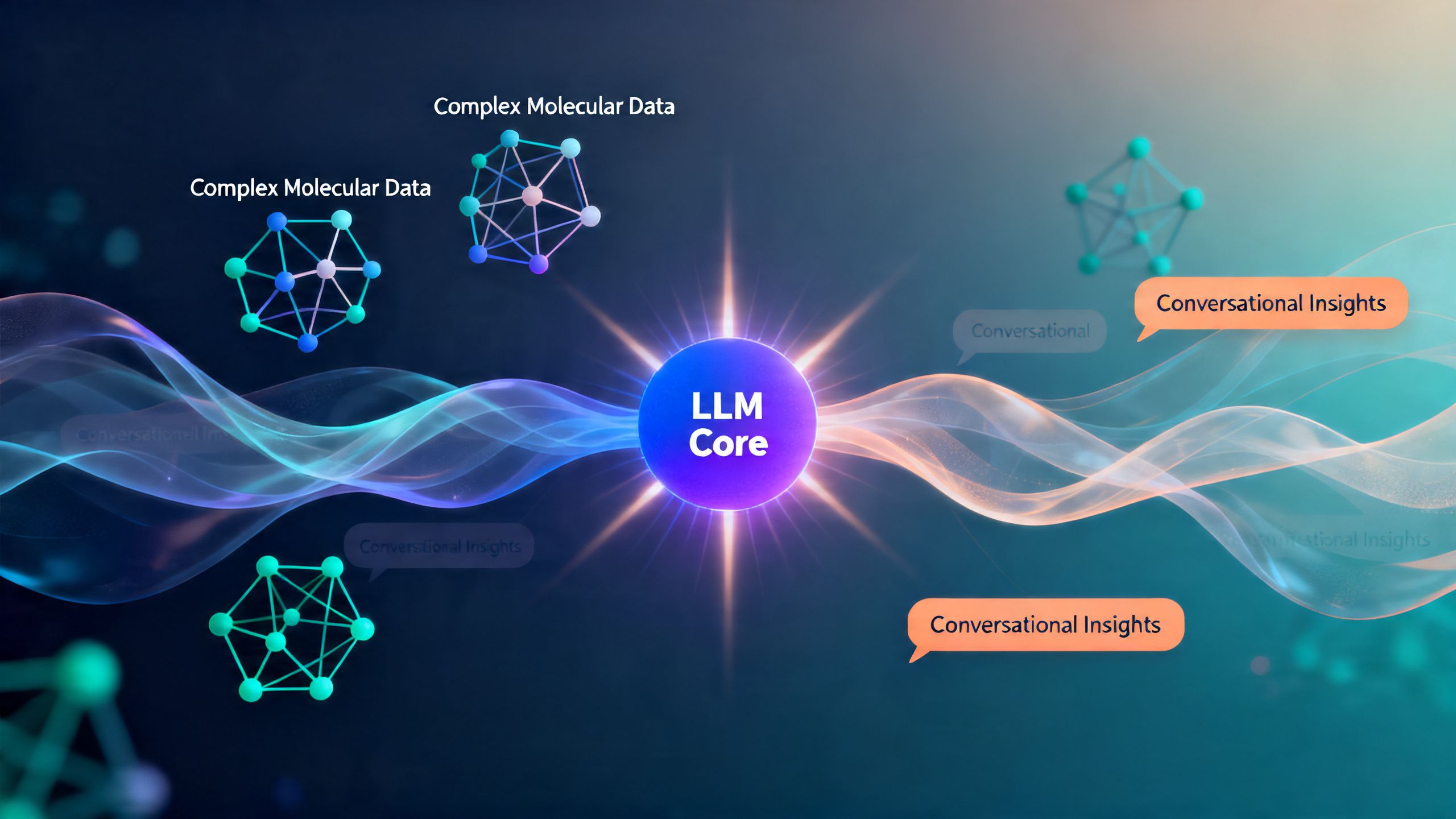

Now, enter the Large Language Models (LLMs), the same tech behind ChatGPT. Initially, off-the-shelf LLMs were terrible at this job, falling flat compared to GNNs. But researchers discovered that with the right training, a single LLM could learn to do the work of multiple GNNs and more, transforming the process from a series of isolated calculations into a unified, interactive conversation.

Why a Unified AI Model is a Game-Changer

The shift from a dozen GNNs to one LLM isn't just a minor upgrade; it's a fundamental change in how drug discovery can be done. It’s about moving from complexity to clarity.

From Model Sprawl to Streamlined Workflow: Slashing Complexity & Costs

Imagine your IT department having to build, train, and maintain a separate, custom tool for every single question you ask. That’s the GNN reality. It's an expensive, operationally nightmarish process. A fine-tuned LLM consolidates this entire infrastructure into one model.

“Without a unified AI solution, chemists had to navigate multiple models to evaluate a single molecule — piecing together disconnected results across different interfaces, data formats, and failure modes.”

This consolidation means less infrastructure, lower maintenance costs, and a dramatically simplified workflow. When a new property needs to be predicted, you don't build a new model from scratch; you just incrementally fine-tune the existing one.

From Numbers to Narratives: Unlocking Conversational Science

GNNs give you a number. An LLM can give you a story. This is the qualitative leap that changes the game. Instead of just getting a prediction, a chemist can now ask the model for its reasoning or even ask it to suggest molecular modifications to achieve a desired outcome.

This turns the AI from a passive prediction tool into an active collaborator. It’s the difference between using a calculator and brainstorming with a fellow expert. This interactive experience is what we see as the ideal next step for AI-assisted drug design.

The Amazon & Nimbus Playbook: How to Train a Genius AI Chemist

So how do you turn a generalist LLM into a chemistry savant? Amazon and Nimbus Therapeutics laid out a clear process, two fine-tuning stages followed by a carefully designed system prompt, that transformed a standard Nova 2 Lite model into a specialized powerhouse.

Step 1: Foundational Knowledge with Supervised Fine-Tuning (SFT)

First, you have to teach the model the language of chemistry. During SFT, the LLM was fed over 55,000 molecules, each labeled with experimental measurements across 11 key properties. This stage is about building foundational knowledge—teaching it to understand chemical structures (represented in a notation called SMILES) and the basic relationships between a molecule and its properties. It’s the AI equivalent of hitting the textbooks.

Key Tip: SFT is essential because domain-specific tasks are far outside a general LLM's pre-training data. You can't expect it to understand SMILES strings without this foundational education.
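To make the SFT step concrete, here is a minimal sketch of how one labeled molecule might be turned into a training record. The prompt/completion layout, the property names, and the numeric values are all illustrative assumptions; the article does not describe the actual data format the team used.

```python
import json

def build_sft_record(smiles, measurements):
    """Turn one labeled molecule into a prompt/completion pair (hypothetical layout)."""
    # Only include properties that were actually measured for this molecule.
    measured = {k: v for k, v in measurements.items() if v is not None}
    names = ", ".join(sorted(measured))
    prompt = f"Molecule (SMILES): {smiles}\nPredict the following properties: {names}"
    completion = json.dumps(measured, sort_keys=True)
    return {"prompt": prompt, "completion": completion}

def to_jsonl(records):
    """Serialize records one per line, a common layout for fine-tuning datasets."""
    return "\n".join(json.dumps(r) for r in records)

# Aspirin as a SMILES string; the property names and values below are made up.
example = build_sft_record(
    "CC(=O)Oc1ccccc1C(=O)O",
    {"logD": 1.2, "permeability": None, "clearance": 35.0},
)
print(to_jsonl([example]))
```

Skipping unmeasured properties is one simple way to handle molecules that only have labels for some of the 11 properties.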

Step 2: Refining Judgment with Reinforcement Fine-Tuning (RFT)

Once the model has the book smarts, it's time to develop predictive judgment. RFT is like giving the AI practice problems and providing feedback. The model makes predictions, and a reward system guides it toward minimizing errors. This is especially critical for properties with limited data, where SFT alone struggles. RFT helps the model learn the subtle interdependencies between properties—for example, how lipophilicity affects permeability.

Key Tip: The reward system is everything. The team found that using Huber loss was the key to effective learning. Huber loss is quadratic for small errors and linear for large ones, so it still gives a meaningful signal on large errors without letting a few outliers dominate training.
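As a rough illustration of a Huber-based reward, the sketch below computes the standard Huber loss on a prediction error and maps it to a score between 0 and 1. The threshold `delta=1.0` and the `1 / (1 + loss)` mapping are assumptions chosen for readability; the article only states that Huber loss was used, not how the reward was shaped.

```python
def huber_loss(error, delta=1.0):
    """Standard Huber loss: quadratic within +/- delta, linear beyond it."""
    abs_err = abs(error)
    if abs_err <= delta:
        return 0.5 * abs_err ** 2
    return delta * (abs_err - 0.5 * delta)

def reward(predicted, measured, delta=1.0):
    """Map Huber loss to a reward in (0, 1]; 1.0 means a perfect prediction.

    The 1 / (1 + loss) shaping is a hypothetical choice for this sketch.
    """
    return 1.0 / (1.0 + huber_loss(predicted - measured, delta))

# A near miss is penalized gently; a large miss grows only linearly.
print(huber_loss(0.5))   # quadratic region
print(huber_loss(3.0))   # linear region
print(reward(2.0, 2.0))  # perfect prediction
```

Because the loss grows linearly past `delta`, one wildly wrong prediction moves the reward far less than it would under squared error, which is the property that makes Huber loss attractive for noisy experimental data.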

Step 3: Prompting for Success

Finally, the model was given a comprehensive “system prompt” that acted as its cheat sheet. This prompt included core chemistry knowledge and detailed definitions of the 11 properties of interest, including their expected value ranges. This ensures the model operates within the correct scientific context, grounding its predictions in established principles.
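A system prompt of this kind can be assembled from a small table of property definitions. The sketch below is hypothetical throughout: the `PropertySpec` type, the wording of the prompt, and the example range are placeholders, not the prompt the team actually used.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class PropertySpec:
    """One property the model may be asked about (illustrative fields)."""
    name: str
    definition: str
    unit: str
    low: float
    high: float

def build_system_prompt(specs):
    """Assemble a plain-text system prompt listing each property and its range."""
    lines = [
        "You are an assistant for medicinal chemists.",
        "Molecules are given as SMILES strings.",
        "Properties you may be asked to predict:",
    ]
    for s in specs:
        lines.append(
            f"- {s.name} ({s.unit}): {s.definition} "
            f"Expected range: {s.low} to {s.high}."
        )
    return "\n".join(lines)

def in_expected_range(spec, value):
    """Sanity-check a prediction against the range stated in the prompt."""
    return spec.low <= value <= spec.high

# Placeholder definition and range, for illustration only.
logd = PropertySpec("logD", "Lipophilicity at physiological pH.", "log units", -2.0, 6.0)
print(build_system_prompt([logd]))
```

Keeping the ranges in a structured form means the same table can ground the prompt and flag predictions that fall outside the stated bounds.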

AI in Drug Discovery: Beyond the Hype

This isn't just a theoretical exercise. The fine-tuned LLM was put to the test on predicting properties critical to a drug's success or failure.

Predicting Critical Molecular Properties: The Big Three

The model was trained to predict 11 properties across three vital categories:

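- Lipophilicity: Describes a molecule's affinity for fatty versus watery environments, which influences whether it can cross biological membranes. Fundamental for absorption.

- Permeability: Measures how readily a drug crosses membranes, and therefore how easily it reaches the bloodstream.

- Clearance: Determines how quickly the body eliminates a drug. Too slow and the drug can build up to toxic levels; too fast and it may not stay around long enough to work.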

These properties have complex interdependencies, which is where a unified model shines.

Unlocking Intramodel Learning: The Hidden Superpower

Siloed GNNs struggle to capture the fact that these properties influence each other. But during RFT, the LLM begins to learn these connections organically. It understands that lipophilicity affects permeability, and both can inform metabolism predictions. This holistic understanding leads to more robust and reliable predictions, as the model isn't just pattern-matching; it's developing a deeper, more integrated