The Code of Life: How AI/ML Antibody Design is Revolutionizing Medicine

Quick Summary (TL;DR)

• The Problem: Discovering therapeutic antibodies is slow and expensive, and the process has not changed much in 40 years. AI models have lacked the reliable data they need to improve.

• The Breakthrough: AWS and Johns Hopkins University created the Antibody Developability Benchmark, the largest, most diverse public dataset for training and testing AI models for antibody design.

• The Impact: A trusted benchmark could drastically shorten drug discovery timelines, reduce costs, and build trust in AI-driven predictions, which in turn could speed the development of new medicines.

—

Remember the frantic, global race for a COVID-19 vaccine? It was a stark reminder of how slow and arduous developing new medical treatments can be. For decades, discovering therapeutic antibodies, the tiny proteins that are workhorses of modern medicine, has been bogged down by long timelines and astronomical costs. Scientists have been flying half-blind: AI holds immense potential, but there has been a critical lack of high-quality data to guide it.

What if you could teach a machine the language of biology to design perfect antibodies on the first try? That's the promise of AI/ML antibody design. But like any great student, AI needs a great teacher—and in the world of data, the teacher is the dataset. This is the story of a groundbreaking collaboration between AWS and Johns Hopkins that finally built the ultimate textbook for AI in drug discovery, and it holds surprising lessons for anyone in the business of data—including eCommerce sellers.

What Exactly is AI/ML Antibody Design?

Think of proteins as sentences and amino acids as the letters. AI/ML antibody design uses advanced algorithms, similar to the large language models (LLMs) that power ChatGPT, to understand and write in this biological language. These models, called protein language models (pLMs), can predict how an antibody will behave based on its amino acid sequence.

Instead of years of trial-and-error in a wet lab, scientists can now ask a model: “If I change this 'letter' in the protein 'sentence,' will the resulting antibody be more stable and effective?” The AI can then generate and rank thousands of potential designs in silico (on a computer), identifying the most promising candidates for real-world testing. It’s about shifting from discovery by chance to design by intention.
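To make the "generate and rank" idea concrete, here is a minimal Python sketch. It is an illustration under loud assumptions, not the method used by AWS or Johns Hopkins: the seed sequence is a made-up fragment, and `toy_score` is a hypothetical placeholder standing in for a real protein language model.

```python
from typing import Callable

AMINO_ACIDS = "ACDEFGHIKLMNPQRSTVWY"  # the 20 standard amino acids
HYDROPHOBIC = set("AILMFVW")


def single_point_variants(seq: str, position: int) -> list[str]:
    """Return every sequence that differs from `seq` only at `position`."""
    return [
        seq[:position] + aa + seq[position + 1:]
        for aa in AMINO_ACIDS
        if aa != seq[position]
    ]


def toy_score(seq: str) -> float:
    """Placeholder score (NOT a real developability model).

    Penalizes the fraction of hydrophobic residues purely so the example
    has something to rank. A real pipeline would call a pLM here.
    """
    return -sum(aa in HYDROPHOBIC for aa in seq) / len(seq)


def rank_variants(
    variants: list[str],
    score_fn: Callable[[str], float],
    top_k: int,
) -> list[tuple[str, float]]:
    """Score each variant and return the `top_k` highest-scoring ones."""
    scored = [(v, score_fn(v)) for v in variants]
    scored.sort(key=lambda pair: pair[1], reverse=True)
    return scored[:top_k]


seed = "QVQLVESGG"  # made-up fragment, for illustration only
candidates = single_point_variants(seed, position=3)
shortlist = rank_variants(candidates, toy_score, top_k=5)
for sequence, score in shortlist:
    print(f"{sequence}\t{score:.3f}")
```

Swapping `toy_score` for a real model is the only change needed to turn this into a genuine screening step; the shortlist is what would go on to wet-lab testing.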

Why This Breakthrough in AI/ML Antibody Design Matters

For 40 years, the process has been stuck in low gear. This new development slams the accelerator. The creation of the Antibody Developability Benchmark by AWS and Johns Hopkins isn't just an academic exercise; it's a paradigm shift with profound real-world consequences.

Benefit 1: Drastically Accelerating Drug Discovery

The traditional path to approving a therapeutic antibody is a marathon. The new benchmark dataset acts as a supercharger. By providing a reliable way to validate AI models, it allows researchers to fail faster and cheaper on a computer, not in a multimillion-dollar lab experiment.

“Trust in the predictions made by these models must be grounded in evaluations against experimental data that is sufficiently large and diverse,” explains Luca Giancardo, an AWS applied scientist. This trust allows researchers to move with unprecedented speed.

Benefit 2: Slashing Prohibitive Costs

Drug discovery is notoriously expensive, with costs often running into the billions. A huge chunk of that expense comes from experimental dead ends. By using AI models trained on this new benchmark, pharmaceutical companies can vet antibody candidates computationally, weeding out the ones destined to fail due to issues like poor stability or solubility before they invest heavily in lab resources.

This cost reduction isn't just about saving money for big pharma; it democratizes discovery, potentially enabling smaller labs and startups to innovate in a space once dominated by giants.

A Guide to Building a Foundational AI Benchmark

So how did AWS and Johns Hopkins build a dataset 20 times more diverse than anything that came before it? They followed a meticulous, three-step process that offers a masterclass in building a reliable data foundation.

Step 1: Identify and Address the Data Gap

The first step was recognizing the core problem: existing public datasets set models up for garbage in, garbage out. They were too small, too focused on a single antibody type, or biased toward successful, clinically approved antibodies. Data with this “survivorship bias” cannot train an AI to predict failure, which is just as important as predicting success.

Key Tip: The team knew they needed data that represented the real sequence space, including both favorable and unfavorable outcomes. A dataset of only winners can't teach you how to avoid losing.

Step 2: Curate a Massively Diverse Dataset

To solve the data gap, they built a dataset of unprecedented scale and heterogeneity. The Antibody Developability Benchmark includes:

- 50 seed antibodies spanning four different structural formats, including IgG, VHH, and scFv.

- 42 distinct antigen targets, ensuring the models learn generalizable principles, not just solutions for one specific disease.

- Systematic mutation strategies, including changes guided by protein language models themselves.

This wasn't just about quantity; it was about deliberate, structured diversity to cover the vast landscape of antibody engineering.

Key Tip: True intelligence requires exposure to a wide range of scenarios. By including different formats and targets, the team ensured their benchmark would be useful for a broad spectrum of therapeutic challenges.
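One way to keep "structured diversity" honest is to store every variant with its provenance and then count what the dataset actually covers. The sketch below is an assumption-laden illustration: the `VariantRecord` fields, the IDs, and the example rows are all hypothetical and do not reflect the benchmark's real schema.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class VariantRecord:
    """Hypothetical record layout; field names are illustrative only."""
    seed_id: str          # which seed antibody the variant derives from
    antibody_format: str  # e.g. "IgG", "VHH", "scFv"
    antigen: str          # target the seed antibody binds
    mutation_source: str  # e.g. "pLM-guided" or "systematic scan"


def coverage_summary(records: list[VariantRecord]) -> dict:
    """Summarize how many seeds, formats, and antigens the records span."""
    return {
        "seeds": len({r.seed_id for r in records}),
        "formats": Counter(r.antibody_format for r in records),
        "antigens": len({r.antigen for r in records}),
        "mutation_sources": Counter(r.mutation_source for r in records),
    }


# Made-up rows, for illustration only.
records = [
    VariantRecord("seed-01", "IgG", "antigen-A", "pLM-guided"),
    VariantRecord("seed-01", "IgG", "antigen-A", "systematic scan"),
    VariantRecord("seed-02", "VHH", "antigen-B", "pLM-guided"),
    VariantRecord("seed-03", "scFv", "antigen-C", "systematic scan"),
]
summary = coverage_summary(records)
print(summary)
```

A summary like this makes gaps visible early: if one format or one antigen dominates the counts, the dataset is drifting back toward the narrow focus the team set out to avoid.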

Step 3: Engineer Variants and Validate with Ground Truth

Finally, data is just theory until it's tested in the real world. The team systematically engineered variants for each seed antibody and then—crucially—validated their properties in wet-lab experiments. This provided the “ground truth” that was missing from other public benchmarks.

They even included “bad examples that have a fighting chance,” as Giancardo put it. These aren't obvious failures but tricky edge cases that truly test an AI's predictive power. This rigorous validation is what turns a simple dataset into a trustworthy benchmark.

Best Practices in AI-Driven Biological Design

This project wasn't just about collecting data; it was about establishing new best practices for applying AI to complex scientific problems.

The Principle of Deliberate Heterogeneity

Most public datasets skew toward clean, successful examples. The Antibody Developability Benchmark is different. It intentionally includes engineered variants that are predicted to be less stable, less pure, or more prone to aggregation. Why? Because an AI model must learn what not to do.

“This range is essential for training and evaluating machine learning models, which require balanced label distributions and exposure to the failure modes they are intended to predict and avoid,” Giancardo notes. It’s the difference between a student who only sees 'A+' papers and one who learns from red-penned drafts.
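A quick way to see why balanced labels matter is to measure them. The following sketch assumes a simple two-class labeling ("favorable" vs. "unfavorable") and uses invented counts; the benchmark's actual labels and proportions are not given in this article.

```python
from collections import Counter


def label_balance(labels: list[str]) -> dict[str, float]:
    """Return the fraction of examples carrying each label."""
    counts = Counter(labels)
    total = sum(counts.values())
    return {label: n / total for label, n in counts.items()}


def minority_fraction(labels: list[str]) -> float:
    """Fraction of the rarest label; near 0 means the model rarely sees it."""
    return min(label_balance(labels).values())


# Invented counts, for illustration only.
winners_only = ["favorable"] * 95 + ["unfavorable"] * 5
deliberately_mixed = ["favorable"] * 55 + ["unfavorable"] * 45

print(minority_fraction(winners_only))
print(minority_fraction(deliberately_mixed))
```

In the first dataset a model could label everything "favorable" and still look accurate, which is exactly the trap a deliberately heterogeneous dataset is meant to close.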

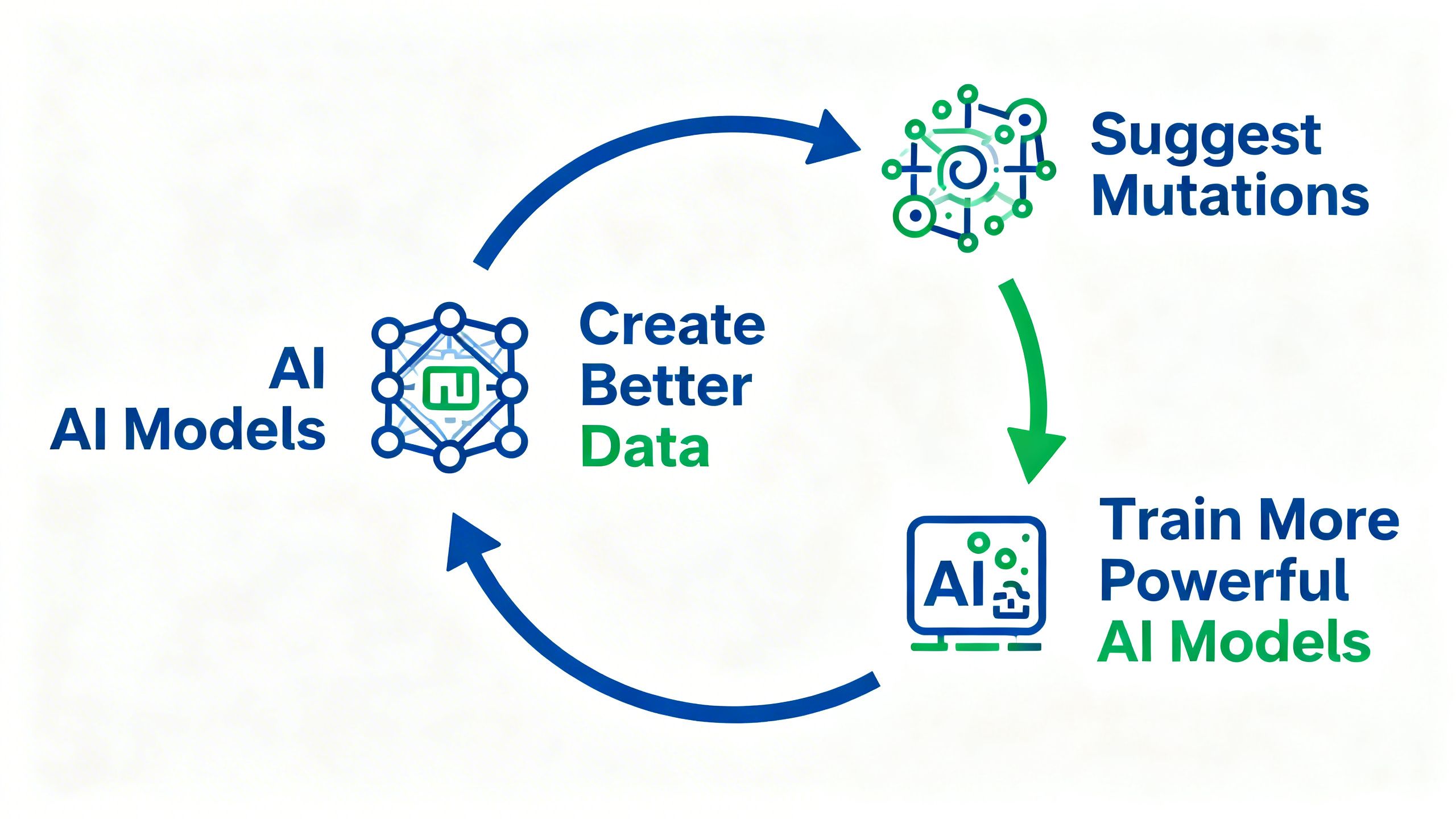

Leveraging Protein Language Models (pLMs)

The team used pLMs not just as a subject of study but as a tool for creating the dataset itself. By using AI to guide the mutation strategy, they could explore the protein sequence space more intelligently. It’s a virtuous cycle: use AI to generate better data to train even better AI.

Real-World Application: The AWS & Johns Hopkins Collaboration

This entire project serves as a powerful case study in solving grand challenges through collaboration and a commitment to data integrity.

The Challenge: A Bottleneck on Innovation

For years, AI researchers were developing exciting new models for protein design, but progress was stalled. As Johns Hopkins professor Jeffrey Gray noted, his own lab’s benchmarks showed that existing models couldn't reliably predict critical features. Without a common, reliable yardstick, it was impossible to know which models were actually better. The field was crowded with tools but lacked a way to measure them.

The Solution: The Antibody Developability Benchmark

The benchmark provides that universal yardstick. For the first time, researchers can confidently answer, “Which model is better suited for our purposes?” by testing them against a massive, diverse, and validated dataset. To keep the evaluation honest, the teams worked in silos using a “zero-shot inference” approach: the Hopkins team ran the models without access to the benchmark data, and the AWS team compared the resulting predictions against it. This separation prevented data leakage and supported the benchmark's integrity.

Why TrackIQ Matters: The Lesson of Data Integrity

So, what does AI-driven antibody design have to do with selling on Amazon? More than you'd think. The core challenge solved by the Antibody Developability Benchmark is one of data chaos. Researchers were drowning in unreliable, biased, and siloed information, making it impossible to get clear answers.

This is the exact problem that plagues eCommerce sellers and agencies. You're buried under a mountain of CSVs, screenshots, and pivot tables from Seller Central, ad consoles, and various other tools. The result? You spend hours piecing together reports that are outdated the moment they're finished and still don't give you a clear answer.

The principle is the same: to make smart decisions, you need a single source of truth built on reliable, unified data.

This is where a platform like TrackIQ becomes essential. It was built to solve the data chaos problem for Amazon sellers. Instead of wrestling with messy data, TrackIQ provides a pristine, unified view of your business. It acts as your data refinery, turning raw information into the fuel for growth.

Just as the benchmark allows scientists to ask a question and get a real answer, TrackIQ’s AI-powered agent lets you do the same for your business. You can ask questions in plain English and get immediate, data-backed insights from your actual Amazon data—no guesswork or hallucinations. It’s about replacing hours of manual work with seconds of clarity.

Common Pitfalls to Avoid in Data-Driven Work

The journey to create the antibody benchmark also highlights common mistakes that are just as relevant in business as they are in science.

Pitfall 1: Trusting Biased or Incomplete Data

Relying on datasets that only show successful outcomes (like clinically approved antibodies or your best-selling ASINs) gives you a dangerously incomplete picture. You can't learn how to mitigate risks or fix underperforming products if you only study your winners. You need to understand the full spectrum of performance.

Pitfall 2: Ignoring the 'Bad' Data

The AWS team made a point to include unfavorable, but not obviously wrong, outcomes. In eCommerce, this is equivalent to analyzing not just your 1-star reviews but also your 3-star reviews, which often contain the most valuable, nuanced feedback. Ignoring this “bad” data means missing critical opportunities to improve.

Advanced Strategy: Zero-Shot Inference for Unbiased Validation

One of the most sophisticated aspects of the AWS/JHU collaboration was their use of “zero-shot inference.” The Hopkins team tested existing AI models without ever giving them access to the benchmark dataset. The AWS team then compared those predictions against the benchmark's ground-truth results.

This separation works like a blinded evaluation: the models never saw the benchmark data, which guards against leakage and supports the benchmark's integrity. For businesses, this is a powerful lesson: always test your assumptions and tools against a clean, independent source of truth before you bet your business on them.
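The blinded comparison described above could be sketched as follows. This is a hedged illustration, not the collaboration's actual scoring code: the article does not say which metric was used, so Spearman rank correlation is an assumption here, and the model names and numbers are invented.

```python
import math


def average_ranks(values: list[float]) -> list[float]:
    """1-based ranks, with tied values sharing their average rank."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        tied_rank = (i + j) / 2 + 1
        for k in range(i, j + 1):
            ranks[order[k]] = tied_rank
        i = j + 1
    return ranks


def pearson(x: list[float], y: list[float]) -> float:
    """Pearson correlation; raises if either input has no variance."""
    mx, my = sum(x) / len(x), sum(y) / len(y)
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sx = math.sqrt(sum((a - mx) ** 2 for a in x))
    sy = math.sqrt(sum((b - my) ** 2 for b in y))
    if sx == 0 or sy == 0:
        raise ValueError("correlation is undefined for constant input")
    return cov / (sx * sy)


def spearman(x: list[float], y: list[float]) -> float:
    """Spearman correlation: Pearson correlation of the ranks."""
    return pearson(average_ranks(x), average_ranks(y))


# Held-out wet-lab measurements (invented). The models never see these.
measured = [0.9, 0.4, 0.7, 0.1, 0.5]

# Predictions submitted by the team running the models (invented).
predictions = {
    "model_a": [0.8, 0.3, 0.9, 0.2, 0.4],
    "model_b": [0.2, 0.6, 0.1, 0.5, 0.9],
}

leaderboard = sorted(
    ((name, spearman(preds, measured)) for name, preds in predictions.items()),
    key=lambda pair: pair[1],
    reverse=True,
)
for name, rho in leaderboard:
    print(f"{name}: {rho:+.2f}")
```

The key design choice is the separation of roles: the code that produces `predictions` never touches `measured`, so a high score cannot come from a model having memorized the answers.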

Conclusion: The Future is Built on Better Data

The launch of the Antibody Developability Benchmark is more than a scientific achievement; it's a testament to a universal principle: progress, whether in medicine or in business, is built on a foundation of clean, reliable, and comprehensive data.

Here are the key takeaways:

- Garbage In, Garbage Out: The quality of your AI and your decisions is capped by the quality of your data. Investing in a solid data foundation is one of the highest-leverage moves you can make.

- Embrace the Full Spectrum: Don't just study your successes. Your failures, and even your mediocre results, hold the most valuable lessons for improvement.

- Demand a Single Source of Truth: Whether you're designing antibodies or managing an Amazon store, data chaos is the enemy of progress. Eliminate it by unifying your data into a single, trustworthy platform.

For scientists, the future of medicine just got a lot brighter. For eCommerce professionals, this story is a powerful reminder to stop wrestling with spreadsheets and start demanding clarity. Optimizing your business starts with optimizing your data—and tools like TrackIQ are built to do exactly that.

—