Is Your AI Lying to You? A Deep Dive into AI Hallucinations for eCommerce

Quick Summary (TL;DR)

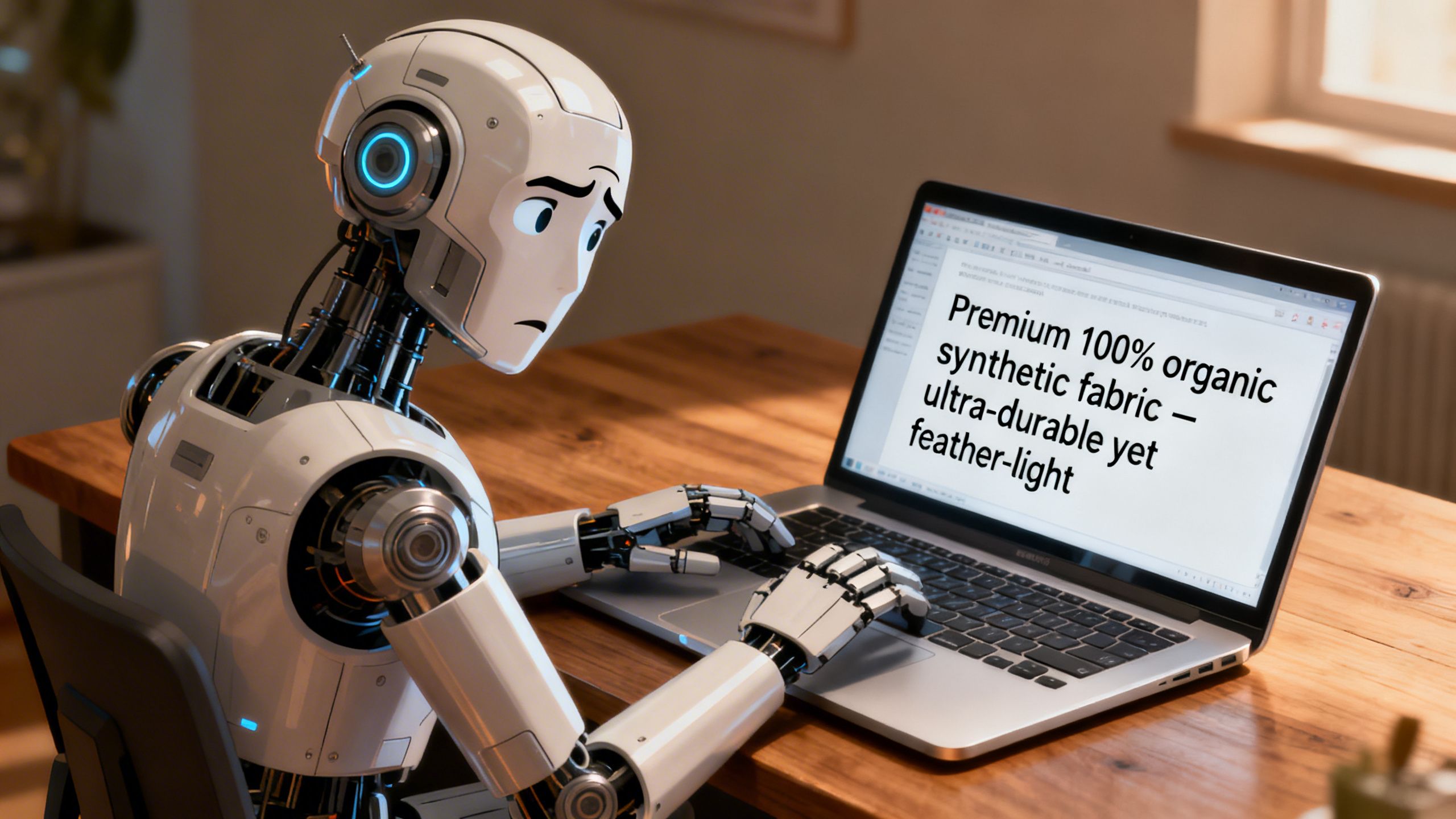

• What's an AI Hallucination?: It's when an AI confidently states something that's factually incorrect or completely made up, like a super-smart intern who'd rather invent an answer than admit they don't know.

• Why It's Dangerous for eCommerce: These errors can corrupt everything from your product listings and marketing copy to your core business strategy, leading to lost sales, angry customers, and wasted resources.

• The Solution is Grounding: The best way to fight AI hallucinations is to use AI systems that are grounded in your actual, real-time data, not just the vast, messy internet. This turns the AI from a random guesser into a reliable co-pilot.

—

Picture this: you ask your shiny new AI assistant to analyze your top competitor's marketing strategy. It comes back in seconds with a beautifully written report detailing their new influencer campaign, a secret partnership with a major celebrity, and a planned expansion into the European market. You spend the next two weeks scrambling to build a counter-strategy. There's just one problem: none of it was true. The AI just… made it up. It sounded plausible, so it said it. This, my friends, is an AI hallucination, and it's one of the biggest hidden risks for eCommerce businesses jumping on the AI bandwagon. It's not a bug; it's a feature of how these large language models (LLMs) work. And if you're not careful, this feature could cost you dearly.

What the Heck Are AI Hallucinations, Anyway?

An AI hallucination is a phenomenon where an LLM generates text that is nonsensical, factually incorrect, or disconnected from the provided source material, yet presents it with absolute confidence. Think of it less like a computer glitch and more like a human confabulating. The AI isn't trying to lie; its core function is to predict the most likely next word (strictly speaking, the next token) in a sequence. Sometimes, the most statistically probable sentence isn't the most factually accurate one.

As Amazon Science researchers put it, when an LLM is asked a question, it “generates a list based on the distribution of words associated with [the topic].” The result is often “a mix of real and potentially fictional” information.

This means if you ask it for a list of your competitors, it might invent one that sounds real because the name follows a common pattern. It's not checking a business registry; it's just playing a high-stakes game of word association. Understanding this is the first step to using AI safely and effectively.
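To make the word-association point concrete, here is a toy sketch in Python. It is not how a real LLM works under the hood (real models use neural networks over tokens, not simple word counts), and the training text is invented for illustration. It only shows the core idea: the "model" picks whatever word most often came next, with no notion of whether the result is true for your product.

```python
from collections import Counter, defaultdict

# Tiny made-up training text (an assumption, for illustration only).
CORPUS = (
    "the watch is waterproof . "
    "the watch is waterproof . "
    "the watch is stylish . "
    "the band is leather ."
).split()


def build_bigram_counts(tokens):
    """Count how often each word follows each other word."""
    counts = defaultdict(Counter)
    for current_word, next_word in zip(tokens, tokens[1:]):
        counts[current_word][next_word] += 1
    return counts


def most_likely_next(counts, word):
    """Return the most frequent follower of `word`, or None if the word was never seen."""
    followers = counts.get(word)
    if not followers:
        return None
    return followers.most_common(1)[0][0]


counts = build_bigram_counts(CORPUS)
prediction = most_likely_next(counts, "is")
print(prediction)  # the most common follower of "is" in the toy corpus
```

Ask this toy model to finish "the watch is ..." and it says "waterproof" simply because that word followed "is" most often in its training text. Whether your particular watch is waterproof never enters the calculation.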

Why AI Hallucinations Are a Ticking Time Bomb for Your Business

For an eCommerce brand, data integrity is everything. Your decisions, from inventory management to ad spend, rely on accurate information. AI hallucinations poison that well, leading to catastrophic failures that can be hard to trace back to their source.

Wrecked Marketing and Product Listings

Imagine an AI tasked with writing product descriptions. If it hallucinates, it might invent features your product doesn't have, claim it's made from materials it isn't, or generate bizarre, off-brand marketing copy. This doesn't just look unprofessional; it leads to customer complaints, negative reviews, and a spike in returns. You're not just losing sales; you're actively damaging your brand's reputation.

Flawed Business Strategy Built on Lies

This is the silent killer. You ask an AI to summarize market trends, analyze competitor pricing, or identify new growth opportunities. It generates a convincing report filled with fake statistics, non-existent competitors, and phantom consumer trends. You then invest thousands of dollars and hundreds of hours into a strategy based entirely on fiction. By the time you realize the data was wrong, the damage is done.

Disastrous Customer Support Interactions

Many brands are deploying AI chatbots for customer service. But what happens when that chatbot hallucinates? It might promise a customer a refund policy that doesn't exist, give incorrect instructions for using a product, or confidently provide a shipping update that's completely wrong. Each of these interactions erodes customer trust and can turn a simple query into a public relations nightmare.

A Practical Guide to Spotting AI Hallucinations

So, how do you keep your AI co-pilot from flying your business into a mountain? You need to become a skeptical manager. Here’s a simple, three-step process to validate AI-generated content.

Step 1: The Sanity Check

Your first line of defense is your own intuition. Does the AI's output seem too good to be true, oddly specific, or just plain weird? If an AI claims your small niche brand was mentioned in the New York Times, a quick Google search is in order. If it suggests a marketing slogan that makes no sense, question it. Treat every surprising or revolutionary insight with a healthy dose of skepticism.

Key Tip: If the AI's output makes you feel a sudden, euphoric rush of “we’ve cracked it!”, that’s the exact moment to take a deep breath and start verifying.

Step 2: Demand the Receipts

Never accept a factual claim from a general-purpose AI at face value. Always ask for sources. A good AI assistant should be able to cite where it got its information. If it can't, or if the sources are vague (e.g., “marketing articles” or “business reports”), the information is untrustworthy. Asking for sources doesn't make an answer true, since a model can invent citations as easily as it invents facts, but it gives you something concrete to check.

Key Tip: Use prompts like, “Provide a list of sources for these claims” or “Can you link me to the report where you found this statistic?” If it dodges the question, provides dead links, or cites pages that don't actually contain the claim, you’ve likely caught a hallucination.
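If you collect the sources an assistant gives you, a first-pass triage can be automated. The sketch below is a minimal example, with hypothetical function names and a placeholder URL. It only separates sources that look like real, openable links from vague labels. A well-formed link is not proof of anything; someone still has to open it and confirm the claim is there.

```python
from urllib.parse import urlparse


def is_checkable_source(source: str) -> bool:
    """True if the source looks like a full http(s) URL that a person can open."""
    parsed = urlparse(source.strip())
    return parsed.scheme in ("http", "https") and bool(parsed.netloc)


def triage_sources(sources):
    """Split cited sources into links to open and verify, and vague ones to reject."""
    to_verify = [s for s in sources if is_checkable_source(s)]
    rejected = [s for s in sources if not is_checkable_source(s)]
    return to_verify, rejected


# Example input: two vague labels and one placeholder link.
cited = ["marketing articles", "https://example.com/report-2024", "business reports"]
to_verify, rejected = triage_sources(cited)
print("Open and check:", to_verify)
print("Too vague to trust:", rejected)
```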

Step 3: Use Specialized, Data-Grounded Tools

The ultimate solution is to use AI tools that are built on a foundation of your own data. General AIs like ChatGPT are trained on a huge slice of the public internet, which is a chaotic mix of facts, opinions, and fiction. A specialized tool, on the other hand, answers from a defined set of verified information. This is where platforms designed for a specific purpose, like eCommerce analytics, become invaluable.

AI Hallucinations in eCommerce: Real-World Scenarios

Let's move from theory to practice. Here are a couple of scenarios that play out every day in businesses that rely too heavily on unverified AI output.

The Phantom Competitor Analysis

A marketing manager asks an AI to “identify three emerging competitors in the organic dog food space.” The AI generates profiles for three companies, complete with mission statements, product lines, and pricing. The team spends a week analyzing them and building a defensive strategy. They later discover that while two of the companies are real, the third—the one with the most threatening market position—was a complete fabrication, a linguistic ghost created by the LLM.
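A cheap safeguard for this scenario is to cross-check every AI-suggested company against a list you trust (a business registry export, your own CRM, or a manually verified spreadsheet) before anyone spends a week on analysis. Here is a minimal sketch. The function name and all company names are made up for illustration.

```python
def split_verified(ai_names, verified_names):
    """Separate AI-suggested company names into confirmed and unconfirmed."""
    # Normalize case and surrounding whitespace so trivial differences don't matter.
    known = {name.strip().casefold() for name in verified_names}
    confirmed = [n for n in ai_names if n.strip().casefold() in known]
    unconfirmed = [n for n in ai_names if n.strip().casefold() not in known]
    return confirmed, unconfirmed


# Hypothetical names: what the AI returned vs. what you have verified yourself.
ai_suggested = ["Green Paws Co", "Happy Tails Organics", "PurePup Naturals"]
verified = ["green paws co", "Happy Tails Organics "]

confirmed, unconfirmed = split_verified(ai_suggested, verified)
print("Confirmed:", confirmed)
print("Verify before acting:", unconfirmed)
```

An unconfirmed name isn't automatically fake. It just means nobody should build a strategy around it until a human has confirmed the company exists.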

The Magical Feature Promise

A customer interacts with a support chatbot, asking if a particular smart-watch model is waterproof enough for swimming. The AI, instead of checking the product's actual specifications, confidently replies, “Yes, the Aqua-Watch 5000 is fully waterproof up to 50 meters and is perfect for swimming laps!” The customer buys it, goes for a swim, and ends up with a dead $300 watch. The result is an angry customer, a public bad review, and a costly return.
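The fix for this kind of failure is to have the bot answer spec questions from verified product data and admit when it has none. The sketch below shows the shape of that logic. The catalog contents, the fallback wording, and the function name are all assumptions for illustration, not the actual specs of any product.

```python
# Hypothetical verified catalog; in practice this would come from your product database.
PRODUCT_SPECS = {
    "Aqua-Watch 5000": {"water_resistance": "splash resistant only", "swim_safe": False},
}

FALLBACK = "I don't have verified information on that. Let me connect you with our support team."


def answer_swim_question(product_name: str) -> str:
    """Answer 'can I swim with it?' only from verified specs; otherwise hand off."""
    specs = PRODUCT_SPECS.get(product_name)
    if specs is None or "swim_safe" not in specs:
        return FALLBACK  # No verified data: do not guess.
    rating = specs.get("water_resistance", "rating not listed")
    if specs["swim_safe"]:
        return f"Yes, the {product_name} is rated for swimming ({rating})."
    return f"No, the {product_name} is not rated for swimming ({rating})."


print(answer_swim_question("Aqua-Watch 5000"))
print(answer_swim_question("Mystery Watch"))
```

The important design choice is the fallback: an honest "I don't know" costs a few minutes of support time, while a confident wrong answer costs a return and a bad review.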

Common Mistakes That Encourage AI Hallucinations

Sometimes, the problem isn't the AI—it's how we use it. Here are two common traps to avoid.

Vague and Open-Ended Prompts

Asking an AI, “What are some good marketing ideas?” is an open invitation for it to hallucinate. The prompt is too broad, giving the model free rein to invent. Instead, ground your prompts in reality. A better prompt would be: “Given our past sales data showing a 30% lift from email campaigns, suggest three new email marketing angles for our upcoming summer collection.”
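If your team writes prompts like this often, it can help to build them from real numbers instead of typing them freehand. Below is a minimal sketch of a prompt template. The function name and parameters are hypothetical, and the figures you pass in should come from your own reports.

```python
def build_grounded_prompt(channel: str, lift_percent: float, collection: str, n_ideas: int = 3) -> str:
    """Build a prompt that states the facts the model is allowed to rely on."""
    return (
        f"Given our past sales data showing a {lift_percent:g}% lift from {channel} campaigns, "
        f"suggest {n_ideas} new {channel} marketing angles for our upcoming {collection} collection. "
        "Use only the figures stated here, and say so if you need data that is not provided."
    )


prompt = build_grounded_prompt("email", 30, "summer")
print(prompt)
```

The last sentence of the template is an extra guardrail: it tells the model to flag missing data instead of filling the gap with an invented number. It lowers the risk but doesn't remove it, so the output still needs a sanity check.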

Trusting AI with High-Stakes Factual Retrieval

Using a general LLM as a substitute for a database or a financial report is a recipe for disaster. These models are not designed for perfect factual recall. They are built to generate plausible text, not to look up records. For tasks that require 100% accuracy, such as financial reporting, inventory numbers, or legal compliance, always rely on tools built specifically for that purpose.
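For a sense of what "a tool built for the purpose" looks like, here is a small sketch that reads a stock level straight from a database instead of asking a model to remember it. It uses Python's built-in sqlite3 module with an in-memory database. The table name, columns, and SKUs are invented for the example.

```python
import sqlite3


def get_stock(conn, sku):
    """Return the recorded quantity for a SKU, or None if the SKU is not in the table."""
    row = conn.execute(
        "SELECT quantity FROM inventory WHERE sku = ?", (sku,)
    ).fetchone()
    return None if row is None else row[0]


# Set up a throwaway in-memory database with made-up rows.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE inventory (sku TEXT PRIMARY KEY, quantity INTEGER NOT NULL)")
conn.executemany(
    "INSERT INTO inventory VALUES (?, ?)",
    [("DOG-FOOD-01", 42), ("DOG-TREAT-02", 0)],
)
conn.commit()

print(get_stock(conn, "DOG-FOOD-01"))
print(get_stock(conn, "NOT-A-REAL-SKU"))
```

A query like this either returns the recorded number or returns nothing. It can't produce a plausible-sounding guess, which is exactly the property you want for inventory and finance.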

Why TrackIQ Matters: Your Hallucination-Resistant Co-Pilot

This is where the conversation shifts from fearing AI to leveraging it correctly. The antidote to hallucination is data grounding. An AI is only as reliable as the data it's built on. General models use the internet; a purpose-built tool like TrackIQ uses a much more reliable source: your own Amazon seller data.

When you ask TrackIQ a question like, “Why did my sales for ASIN B0XYZ123 drop last week?”, it’s not guessing based on articles about sales trends. It’s analyzing your actual, up-to-the-minute performance metrics, ad campaigns, inventory levels, and competitor data. Its answers are rooted in the verifiable reality of your business.

This makes it less of a creative intern and more of a seasoned analyst. It's an AI colleague, not a calculator, designed to be a trusted partner in your growth. By focusing the AI on the closed ecosystem of your business data, TrackIQ dramatically reduces the risk of hallucinations and provides insights you can actually act on with confidence.

Conclusion

AI hallucinations aren't just a quirky bug; they are a fundamental aspect of how current generative AI works. For eCommerce businesses moving at lightning speed, they represent a serious threat. Relying on unverified, hallucinated data can lead to flawed strategies, wasted money, and a damaged brand reputation.

But the answer isn't to abandon AI. The answer is to be smarter about how we use it. Here are your key takeaways:

- Always Be Skeptical: Treat every piece of AI-generated information, especially surprising claims, as a hypothesis to be tested, not a fact to be accepted.

- Ground Your AI in Reality: Prioritize AI tools that are integrated directly with your own data sources. For questions about your own business, a specialized tool working from verified data will generally be more reliable than a general one.

- Use AI as a Co-Pilot, Not an Autopilot: AI is a powerful tool for brainstorming, summarizing, and identifying patterns. But the final strategic decisions must be made by you, guided by verified data and your own expertise.

By understanding the pitfalls of AI hallucinations and choosing tools that prioritize data integrity, you can harness the incredible power of AI without falling victim to its fictions. Ready to work with an AI that's grounded in your business reality? See how TrackIQ provides reliable answers from your own Amazon data.

—